A regulation arrives. Or an auditor. Or a new market with stricter rules. PII is a thing the application was always sloppy about, and now it is a thing the application has to be careful with. This is how PII externalization begins: as someone else’s deadline, landing on the engineering team as an initiative.

The work looks like encryption at first. It is not.

Identify

The first question is not how to encrypt. The first question is what to encrypt.

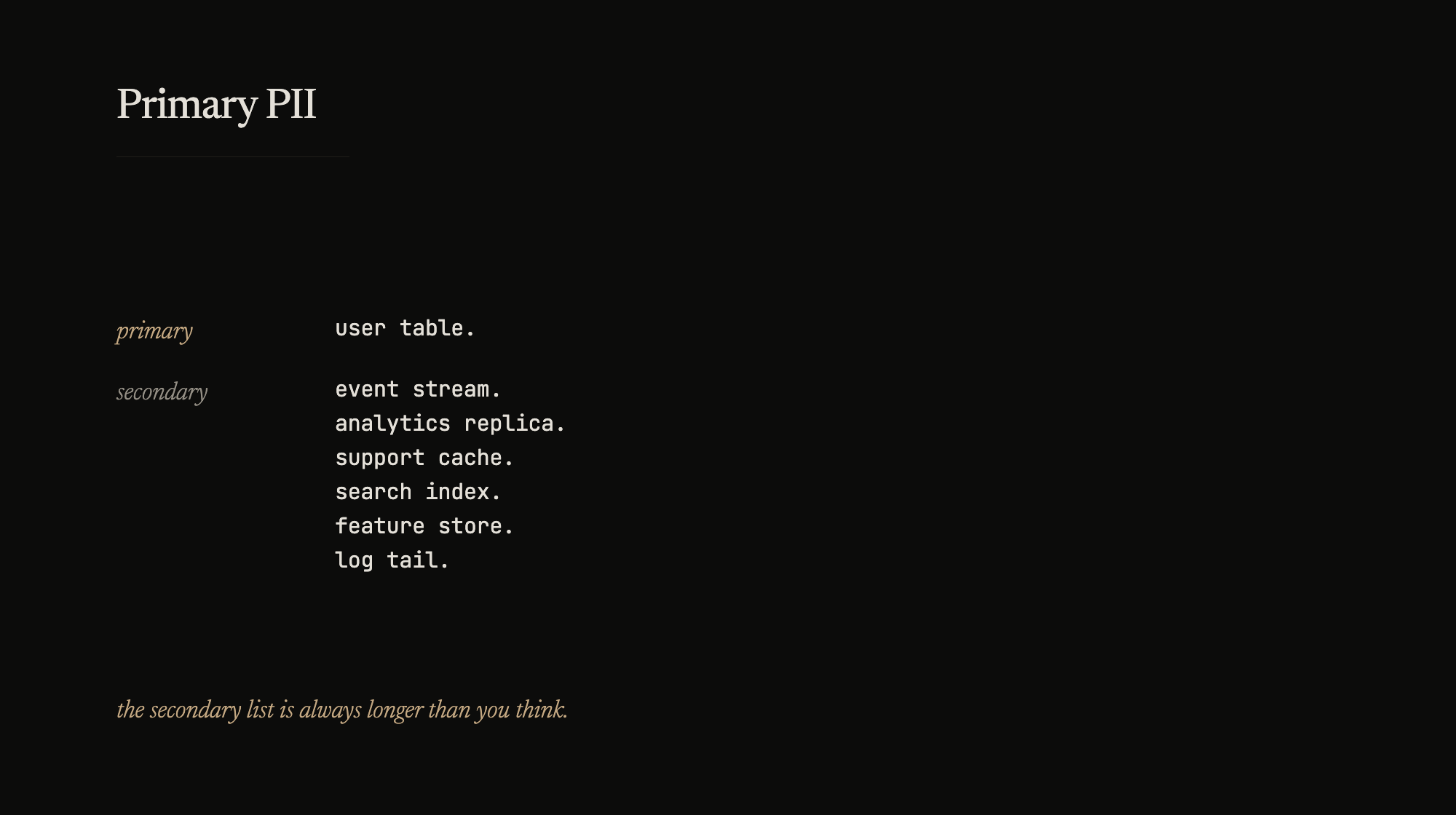

Application databases carry PII in more places than the model diagram admits. The primary PII – the canonical fields the regulation cares about – sits in the user table, sometimes in the account table, maybe in a KYC document store. That much is visible. What is not visible is the secondary copies: the event stream that captured every edit to the user table, the analytics replica that got a nightly copy, the customer-support tool that pulls by customer ID, the search index that tokenized names for typeahead, the machine learning feature store that froze email-as-string into a categorical column, the log line someone added in 2019 to debug a support ticket and forgot.

An honest audit takes weeks. Not because the work is complex, but because every team has a story about a side-channel they set up to help with something and never tore down. The “where does PII live” diagram has a lot more lines than the application diagram does.

Primary is the source of truth. Secondary is every projection of it. The project only has a chance if you can identify both.
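One way to keep the audit honest is to record every location as data rather than as a diagram. The sketch below is a minimal, hypothetical inventory: the `PiiLocation` type, the store and column names, and the `derived_from` convention are all illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class PiiLocation:
    store: str                          # table, index, stream, or log (names are illustrative)
    column: str
    derived_from: Optional[str] = None  # None marks a primary; otherwise the primary it copies

    @property
    def key(self) -> str:
        return f"{self.store}.{self.column}"

    @property
    def is_primary(self) -> bool:
        return self.derived_from is None


# Hypothetical inventory entries, loosely following the examples in the text.
INVENTORY = [
    PiiLocation("user", "email"),
    PiiLocation("user", "name"),
    PiiLocation("analytics_replica", "email", derived_from="user.email"),
    PiiLocation("search_index", "name_tokens", derived_from="user.name"),
    PiiLocation("feature_store", "email_category", derived_from="user.email"),
]


def projections_of(primary_key: str, inventory: List[PiiLocation] = INVENTORY) -> List[str]:
    """Every secondary copy that must change when this primary changes."""
    return [loc.key for loc in inventory if loc.derived_from == primary_key]


def orphans(inventory: List[PiiLocation] = INVENTORY) -> List[str]:
    """Secondary copies whose claimed primary is missing from the inventory."""
    primaries = {loc.key for loc in inventory if loc.is_primary}
    return [
        loc.key
        for loc in inventory
        if loc.derived_from is not None and loc.derived_from not in primaries
    ]
```

A non-empty `orphans()` result means a copy was found before its source was, which is usually a sign the audit is not finished.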

Two mechanisms

Once you know where primary PII lives, you have two choices for what to do with it.

Encrypt in place. The data stays where it is – in the user table, the account table, the KYC store. Each field gets encrypted at rest, decrypted on read, through a shared encrypt/decrypt layer that every service talks to. The schema does not change in shape; values change from plaintext to ciphertext. Existing queries mostly still work, as long as the application fetches through the layer.

Isolate and tokenize. The plaintext PII moves out of the application database entirely, into a separate vault. What stays in the application table is a token – a random, stable, non-sensitive reference. Whenever a service needs the plaintext, it asks the vault and receives it back. Writes go through the vault first; reads detokenize on demand.
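The tokenize and detokenize round trip can be sketched in a few lines. This is an in-memory stand-in, not a real vault: `InMemoryVault`, the `tok_` prefix, and the reading of "stable" as "the same value always gets the same token" are assumptions made for illustration. A real vault is a separate service that encrypts what it stores and controls who may detokenize.

```python
import secrets


class InMemoryVault:
    """Hypothetical stand-in for a PII vault, for illustration only."""

    def __init__(self):
        self._by_token = {}
        self._by_value = {}

    def tokenize(self, plaintext: str) -> str:
        # Stable: a value that was already vaulted gets its existing token back.
        existing = self._by_value.get(plaintext)
        if existing is not None:
            return existing
        # Random: the token carries no information about the value it stands for.
        token = "tok_" + secrets.token_urlsafe(16)
        self._by_token[token] = plaintext
        self._by_value[plaintext] = token
        return token

    def detokenize(self, token: str) -> str:
        return self._by_token[token]


vault = InMemoryVault()
# The application row keeps only the token; the plaintext lives in the vault.
user_row = {"id": 42, "email_token": vault.tokenize("alice@example.com")}
```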

Both use envelope encryption underneath. Data is encrypted with a per-record data key; data keys are wrapped by a key-encryption key (KEK); the KEK can be rotated centrally without re-encrypting the records, because at most the small wrapped data keys need to be re-wrapped. That mechanism is not what distinguishes the approaches. What distinguishes them is where the encrypted data lives, and what operations are possible without decryption.
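The shape of envelope encryption is easier to see in code. The sketch below is a structural illustration only. To stay within the standard library it uses a stand-in cipher (an HMAC-SHA256 keystream, unauthenticated); anything real should use a vetted AEAD such as AES-GCM from a maintained library. The function names and the record layout are assumptions.

```python
import hashlib
import hmac
import secrets


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    # STAND-IN cipher: HMAC-SHA256 in counter mode, with no integrity protection.
    out, counter = b"", 0
    while len(out) < length:
        out += hmac.new(key, nonce + counter.to_bytes(4, "big"), hashlib.sha256).digest()
        counter += 1
    return out[:length]


def seal(key: bytes, plaintext: bytes) -> bytes:
    nonce = secrets.token_bytes(16)  # fresh nonce per call, stored in front of the body
    stream = _keystream(key, nonce, len(plaintext))
    return nonce + bytes(a ^ b for a, b in zip(plaintext, stream))


def unseal(key: bytes, blob: bytes) -> bytes:
    nonce, body = blob[:16], blob[16:]
    stream = _keystream(key, nonce, len(body))
    return bytes(a ^ b for a, b in zip(body, stream))


def encrypt_record(kek: bytes, plaintext: bytes) -> dict:
    dek = secrets.token_bytes(32)            # per-record data key
    return {
        "ciphertext": seal(dek, plaintext),  # data encrypted under the data key
        "wrapped_dek": seal(kek, dek),       # data key encrypted under the KEK
    }


def decrypt_record(kek: bytes, record: dict) -> bytes:
    dek = unseal(kek, record["wrapped_dek"])
    return unseal(dek, record["ciphertext"])


def rotate_kek(old_kek: bytes, new_kek: bytes, record: dict) -> dict:
    # Only the wrapped data key is rewritten; the record ciphertext is left alone.
    dek = unseal(old_kek, record["wrapped_dek"])
    return {"ciphertext": record["ciphertext"], "wrapped_dek": seal(new_kek, dek)}
```

Rotation touches 48 bytes per record here (a 16-byte nonce plus a 32-byte key) regardless of how large the encrypted field is, which is what makes central rotation practical.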

In both cases, the stored values change underneath – plaintext becomes ciphertext or a token – but the application does not have to see that change. The model layer absorbs it. Services that read through the model get plaintext on the way out; services that write through the model send plaintext in, and the model calls the cipher layer or the vault before persistence. Application code stays mostly as it was. New operations – uniqueness by ciphertext, searches against tokens – need rewiring in the model, not at the call sites.
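A minimal sketch of a model absorbing the change, using the vault variant. Everything here is hypothetical: the `User` model, the `email_token` column, and the `DictVault` stand-in exist only to show that call sites keep reading and writing `user.email` while persistence only ever sees a token.

```python
import secrets


class DictVault:
    """Hypothetical in-memory vault, only here to make the sketch self-contained."""

    def __init__(self):
        self._data = {}

    def tokenize(self, plaintext: str) -> str:
        token = "tok_" + secrets.token_urlsafe(16)
        self._data[token] = plaintext
        return token

    def detokenize(self, token: str) -> str:
        return self._data[token]


class User:
    """Callers use `.email`; the stored row holds `email_token` instead."""

    def __init__(self, vault, user_id: int, email: str):
        self._vault = vault
        self.id = user_id
        self.email = email  # routed through the setter below

    @property
    def email(self) -> str:
        # Read path: detokenize on demand.
        return self._vault.detokenize(self.email_token)

    @email.setter
    def email(self, plaintext: str) -> None:
        # Write path: the vault is called before anything is persisted.
        self.email_token = self._vault.tokenize(plaintext)

    def to_row(self) -> dict:
        # What actually reaches the application database.
        return {"id": self.id, "email_token": self.email_token}
```

Swapping the vault calls for calls into a cipher layer gives the encrypt-in-place version of the same model; the call sites do not change in either case.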

The access-level option

Between encryption and not storing is a third approach that is not strictly externalization. The plaintext stays in the table. Database roles decide who sees it. A role assigned to the analytics pipeline, or a support read-only account, gets masked values back; a role used by the live application gets the real thing. Tooling for this exists at the database layer – role-based masking, view-based rewrites, configuration-level controls instead of code changes.

This is not a replacement for encryption or tokenization – the data is still in plaintext on disk, and a compromised host leaks it in full. But it shrinks everyday exposure to non-application consumers without a migration. Some of the secondary-copy headaches disappear once the replica shows masked values to analytics; the primary PII still needs its proper mechanism, but the secondary surface gets smaller without a code change.
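The effect of role-based masking can be emulated in a few lines. In practice this logic lives in the database as a masking policy or a view, not in application code; the Python below only shows what each consumer would get back. The role name `app` and the masking rule (keep the first character and the domain) are assumptions.

```python
UNMASKED_ROLES = {"app"}  # hypothetical role used by the live application


def mask_email(email: str) -> str:
    """Keep the first character and the domain, hide the rest of the local part."""
    local, sep, domain = email.partition("@")
    if not sep:
        return "*" * len(email)  # not an email shape: hide everything
    return local[:1] + "*" * max(len(local) - 1, 0) + "@" + domain


def read_user(row: dict, role: str) -> dict:
    """Return the row as a given role would see it through a masked view."""
    if role in UNMASKED_ROLES:
        return dict(row)
    return {**row, "email": mask_email(row["email"])}


row = {"id": 7, "email": "alice@example.com"}
as_app = read_user(row, "app")              # email: alice@example.com
as_analytics = read_user(row, "analytics")  # email: a****@example.com
```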

Tradeoffs

Encrypt in place is the simpler migration. The data stays in its joins. A report that selects by user ID still returns a row; the fields that matter come back encrypted, and the reporting layer decrypts on its way out. If deterministic encryption is used for specific fields, a value encrypts to the same ciphertext every time, and it can still serve as a lookup key or a uniqueness constraint. A query can ask “is this email registered?” without decryption, by encrypting the input and comparing ciphertexts. That is powerful. It is also the property that weakens the security – deterministic encryption leaks equality, which is sometimes enough for an attacker.
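The lookup-without-decryption trick, and the equality leak that comes with it, can be shown with a small sketch. It uses a stand-in built from HMAC-SHA256 in the style of a synthetic-IV scheme so that it runs on the standard library alone; it is not a vetted cipher, and a real system would use an established deterministic mode such as AES-SIV from a maintained library. The keys and function names are illustrative assumptions.

```python
import hashlib
import hmac


def _stream(key: bytes, iv: bytes, length: int) -> bytes:
    # STAND-IN keystream: HMAC-SHA256 in counter mode.
    out, counter = b"", 0
    while len(out) < length:
        out += hmac.new(key, iv + counter.to_bytes(4, "big"), hashlib.sha256).digest()
        counter += 1
    return out[:length]


def det_encrypt(enc_key: bytes, mac_key: bytes, plaintext: bytes) -> bytes:
    # The IV is derived from the plaintext, so equal inputs give equal ciphertexts.
    iv = hmac.new(mac_key, plaintext, hashlib.sha256).digest()[:16]
    body = bytes(a ^ b for a, b in zip(plaintext, _stream(enc_key, iv, len(plaintext))))
    return iv + body


def det_decrypt(enc_key: bytes, mac_key: bytes, blob: bytes) -> bytes:
    iv, body = blob[:16], blob[16:]
    plaintext = bytes(a ^ b for a, b in zip(body, _stream(enc_key, iv, len(body))))
    expected = hmac.new(mac_key, plaintext, hashlib.sha256).digest()[:16]
    if not hmac.compare_digest(iv, expected):
        raise ValueError("ciphertext failed integrity check")
    return plaintext


ENC_KEY = b"\x01" * 32  # illustrative fixed keys; real keys come from a key manager
MAC_KEY = b"\x02" * 32


def email_column_value(email: str) -> bytes:
    return det_encrypt(ENC_KEY, MAC_KEY, email.encode("utf-8"))


stored = {email_column_value("alice@example.com")}  # what the email column holds


def is_registered(email: str) -> bool:
    # Encrypt the input and compare ciphertexts; nothing is decrypted.
    return email_column_value(email) in stored
```

The same property that makes `is_registered` work means anyone who can read the column can tell which rows share a value, without ever holding a key.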

Tokenize and vault is the cleaner isolation. The application database holds no plaintext, which is exactly what the auditor wants to hear. But every operation that was cheap under encryption becomes a call to the vault. Uniqueness on email requires a separate layer that can answer “is this email already registered” without detokenizing – typically a deterministic hash kept alongside the token. Searching, sorting, fuzzy matching all need strategies. Bulk operations become bulk vault calls, which cost money and hit rate limits.
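A sketch of that uniqueness layer follows. Where the text says "deterministic hash", this example swaps in a keyed hash (HMAC-SHA256), because an unkeyed hash of an email can be reversed by hashing guesses. The `UserTable` class, the `email_index` column, and the lowercase-and-trim normalization are assumptions made for illustration.

```python
import hashlib
import hmac


def normalize_email(email: str) -> str:
    # Assumption: emails are compared case-insensitively, ignoring outer whitespace.
    return email.strip().lower()


def blind_index(index_key: bytes, email: str) -> str:
    """Deterministic keyed digest that supports equality checks only."""
    message = normalize_email(email).encode("utf-8")
    return hmac.new(index_key, message, hashlib.sha256).hexdigest()


class UserTable:
    """Hypothetical application table: it stores a token and an index, never the email."""

    def __init__(self, index_key: bytes):
        self._index_key = index_key
        self._rows = {}  # email_index -> row, acting as the unique constraint

    def insert(self, user_id: int, email: str, email_token: str) -> None:
        idx = blind_index(self._index_key, email)
        if idx in self._rows:
            raise ValueError("email already registered")
        self._rows[idx] = {"id": user_id, "email_token": email_token, "email_index": idx}

    def is_registered(self, email: str) -> bool:
        # Answered from the index alone; the vault is never called.
        return blind_index(self._index_key, email) in self._rows
```

The index key is itself a secret to manage, and the index still leaks equality in the same way deterministic encryption does. It answers exact-match questions only; sorting and fuzzy matching need different strategies.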

Masking works alongside either approach, not instead of it. It is the cheapest way to cut secondary-surface exposure without a migration.

There is a third axis that people do not talk about until the migration is underway. With encryption in place, a mistake in the access layer can leak plaintext to a consumer that should not see it. With tokenization, the same mistake leaks a token that is useless on its own. The blast radius of a leak is smaller with tokenization. The operational complexity is larger.

No PII is the best PII

The mechanism choice matters. It matters less than the decision that sits upstream of it.

The cheapest, most secure PII is the PII you do not hold. The second cheapest is the PII you hold briefly and scrub after use. A customer-support tool that fetches an email for the duration of a ticket, displays it, and forgets it carries less risk than the same tool with a local cache. An analytics pipeline that hashes an email at ingest and drops the original carries less risk than one that encrypts it. A log line that never writes the full number carries less risk than one that does, even if the log store is encrypted.
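The log-line case is the easiest of the three to enforce in code. Below is a minimal sketch using a standard `logging.Filter` that masks long digit runs before a record reaches any handler. The threshold of eight digits and the choice to keep the last four are assumptions; a real rule depends on which numbers the application handles.

```python
import logging
import re

# Assumption: a run of 8 or more digits is an identifier worth masking.
_DIGIT_RUN = re.compile(r"\d{8,}")


def _keep_last_four(match: "re.Match") -> str:
    digits = match.group(0)
    return "*" * (len(digits) - 4) + digits[-4:]


class RedactNumbers(logging.Filter):
    """Mask long digit runs so the full number is never written to the log store."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Render the message with its arguments first, then redact the result.
        record.msg = _DIGIT_RUN.sub(_keep_last_four, record.getMessage())
        record.args = None
        return True  # keep the record, now redacted


def install_redaction(logger: logging.Logger) -> None:
    logger.addFilter(RedactNumbers())
```

A filter attached to a logger applies only to records logged directly on that logger, so in a larger application it may be safer to attach the same filter to each handler instead.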

The externalization project is the moment to ask, for each piece of PII, whether storage is necessary at all. The answer is “yes” more often than we want it to be. Every “no” removes an entire column of work – no encryption, no tokenization, no access policies, no rotation, no audit. The best PII architecture has less PII.

Build, buy, both

Doing this from scratch is a project. A company with engineering capacity builds it. A company that needs the compliance story now reaches for a vendor that ships tokenization and vaulting as a service. Most teams end up doing both – the vendor for the fast path to an audit, the in-house build in parallel for control and cost over the long term. That is not failure. That is how the industry does this right now.

The system design conversation is the same either way: identify, isolate, choose the mechanism, and ask the upstream question – do we need this data at all.

The externalization project is front-heavy. The first month of identification decides what the next six months look like. The encryption and tokenization are mechanical once the right data has been identified and the right operations are mapped. A team that skips identification and starts with the mechanism rebuilds the whole thing after the first audit finds a side-channel nobody thought to check.

A team that skips the “do we even need this” question builds infrastructure for data that should not be there.