OCR on government documents works well until you look at the address fields.

State: "DKI JAKRTA"

City: "JAKRTA PUSAT"

District: "MENTNG"

Village: "MENTENG"

Dropped vowels, character substitutions, truncated names. Indonesian place names get mangled in predictable ways – ‘A’ becomes ‘R’, characters vanish mid-word. The OCR engine reads the image fine. It just can’t spell.

The problem: take these broken strings and map them back to real administrative divisions. Province, city, district, village – the hierarchy matters, and every level needs to resolve correctly.

The interface

Messy input in, clean output out. No knowledge of OCR vendors, HTTP, or databases.

type AddressSanitizer interface {
	Sanitize(input AddressData) *SanitizedResult
	IsReady() bool
	IsHealthy() bool
}

An adapter layer upstream normalizes vendor-specific formats into AddressData before the sanitizer sees it.
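The interface leaves AddressData and SanitizedResult undefined. The sketch below shows one possible shape; it is not the actual set of types. The input holds the four address levels. The result carries the ValidationErrors, IsValid, and Skipped fields used in the async-loading code further down. The vendorAddress payload is a hypothetical stand-in for what an OCR vendor might return, and its field names are borrowed from the Indonesian terms for each level. fromVendor plays the role of the adapter.

```go
package main

import (
	"fmt"
	"strings"
)

// AddressData is the vendor-neutral input the sanitizer sees.
// Field names are an assumption; State holds the province-level value.
type AddressData struct {
	State    string
	City     string
	District string
	Village  string
}

// SanitizedResult: ValidationErrors, IsValid and Skipped appear in the
// article; Address and Confidence are hypothetical additions.
type SanitizedResult struct {
	Address          AddressData
	Confidence       map[string]float64 // per-field confidence, keyed by level
	ValidationErrors []string
	IsValid          bool
	Skipped          bool
}

// vendorAddress is a hypothetical vendor payload with its own field names.
type vendorAddress struct {
	Provinsi  string
	KabKota   string
	Kecamatan string
	KelDesa   string
}

// fromVendor is the adapter step: it maps vendor fields onto AddressData
// and trims stray whitespace, so the sanitizer never sees vendor formats.
func fromVendor(v vendorAddress) AddressData {
	return AddressData{
		State:    strings.TrimSpace(v.Provinsi),
		City:     strings.TrimSpace(v.KabKota),
		District: strings.TrimSpace(v.Kecamatan),
		Village:  strings.TrimSpace(v.KelDesa),
	}
}

func main() {
	in := fromVendor(vendorAddress{Provinsi: " DKI JAKRTA ", KabKota: "JAKRTA PUSAT", Kecamatan: "MENTNG", KelDesa: "MENTENG"})
	fmt.Printf("%+v\n", in)
}
```

Adding a second vendor then means writing another small mapping function; the sanitizer itself is untouched.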

Correction table

The first pass is a static map of known OCR errors. Reviewing thousands of OCR outputs showed that the same mistakes repeat:

corrections := map[string]string{
	"jakrta":     "jakarta",
	"yogyakrta":  "yogyakarta",
	"bandng":     "bandung",
	"smrang":     "semarang",
	"jwa barat":  "jawa barat",
	"jwa tengah": "jawa tengah",
}

This handles the common cases. Static corrections have a ceiling, though – the variety of OCR errors outruns any hand-curated map.
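The table alone doesn't say how it is applied. A key like "jakrta" has to match inside longer fields such as "DKI JAKRTA", and "jwa barat" spans two words. Under those constraints, one plausible approach is a greedy word-level pass that tries the longest multi-word key first. The following is a minimal sketch under that assumption; applyCorrections and longestKey are hypothetical names.

```go
package main

import (
	"fmt"
	"strings"
)

// corrections is the static OCR-error table (lowercase, space-separated keys).
var corrections = map[string]string{
	"jakrta":     "jakarta",
	"yogyakrta":  "yogyakarta",
	"bandng":     "bandung",
	"smrang":     "semarang",
	"jwa barat":  "jawa barat",
	"jwa tengah": "jawa tengah",
}

// longestKey returns the largest number of words in any key.
func longestKey(table map[string]string) int {
	n := 1
	for k := range table {
		if w := len(strings.Fields(k)); w > n {
			n = w
		}
	}
	return n
}

// applyCorrections (hypothetical) lowercases and tokenizes raw, then scans
// left to right, replacing the longest run of words that matches a key.
// Output is lowercase; downstream matching is assumed case-insensitive.
func applyCorrections(raw string, table map[string]string) string {
	words := strings.Fields(strings.ToLower(raw))
	maxWords := longestKey(table)
	out := make([]string, 0, len(words))
	for i := 0; i < len(words); {
		matched := false
		for n := maxWords; n >= 1; n-- {
			if i+n > len(words) {
				continue
			}
			if fix, ok := table[strings.Join(words[i:i+n], " ")]; ok {
				out = append(out, fix)
				i += n
				matched = true
				break
			}
		}
		if !matched {
			out = append(out, words[i])
			i++
		}
	}
	return strings.Join(out, " ")
}

func main() {
	fmt.Println(applyCorrections("DKI JAKRTA", corrections))  // dki jakarta
	fmt.Println(applyCorrections("JWA  BARAT", corrections))  // jawa barat
	fmt.Println(applyCorrections("KOTA BANDNG", corrections)) // kota bandung
}
```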

Multi-strategy matching

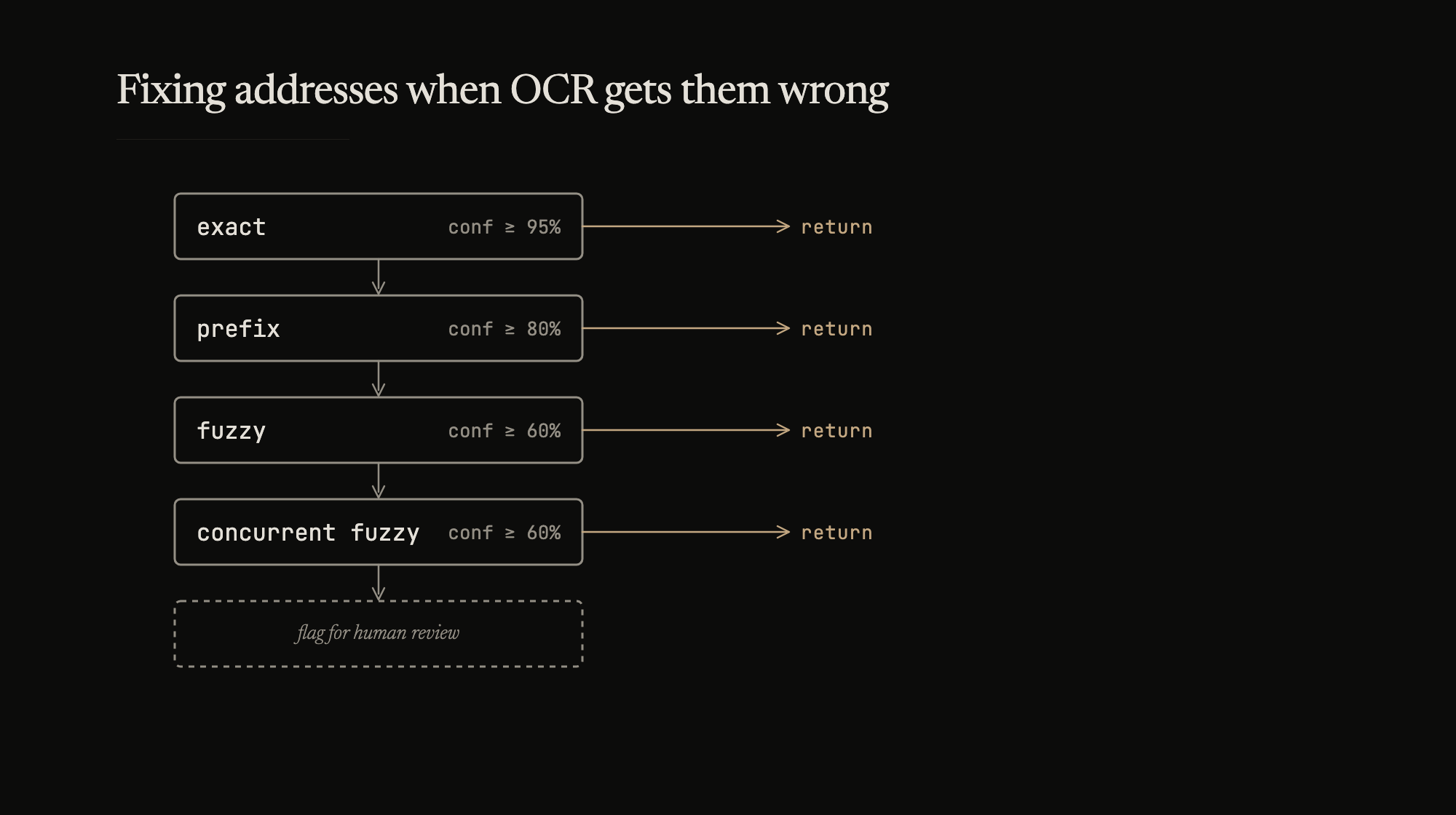

When the correction table misses, the engine tries four strategies in order of reliability. Each returns a confidence score. The first match above threshold wins.

Exact match (confidence: 100%). Hash table lookup against the reference dataset. Fast and definitive.
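Exact matching only works if the input and the reference set are normalized the same way. The following is a minimal sketch, assuming names are compared in uppercase with whitespace collapsed. normalize and exactIndex are hypothetical names.

```go
package main

import (
	"fmt"
	"strings"
)

// normalize uppercases and collapses whitespace runs, so "jakarta  pusat"
// and "JAKARTA PUSAT" produce the same key (assumed normalization rule).
func normalize(s string) string {
	return strings.Join(strings.Fields(strings.ToUpper(s)), " ")
}

// exactIndex (hypothetical) maps normalized names to canonical spellings.
type exactIndex map[string]string

func newExactIndex(names []string) exactIndex {
	idx := make(exactIndex, len(names))
	for _, n := range names {
		idx[normalize(n)] = n
	}
	return idx
}

// lookup is a single hash-table probe; a hit always has confidence 1.0.
func (idx exactIndex) lookup(input string) (string, float64, bool) {
	if name, ok := idx[normalize(input)]; ok {
		return name, 1.0, true
	}
	return "", 0, false
}

func main() {
	idx := newExactIndex([]string{"JAKARTA PUSAT", "MENTENG", "BANDUNG"})
	name, conf, ok := idx.lookup("jakarta  pusat")
	fmt.Println(name, conf, ok) // JAKARTA PUSAT 1 true
}
```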

Prefix match (confidence: 90-95%). OCR often gets the beginning right but truncates the tail – common with longer names and edge-of-frame text. “JAKARTA PUS” resolves to “JAKARTA PUSAT”, “BANDUNG KO” to “BANDUNG KOTA”. Minimum length thresholds prevent short fragments from matching. Confidence scales with coverage:

confidence = (input_length / full_name_length) * 0.95
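Here is a sketch of prefix matching with that coverage formula. The article doesn't give its minimum length, so minPrefixLen = 4 is an assumption. When several reference names share the prefix, this version keeps the one with the highest coverage, which is the shortest. Inputs are assumed to be normalized to uppercase already.

```go
package main

import (
	"fmt"
	"strings"
	"unicode/utf8"
)

// minPrefixLen is a hypothetical floor that stops short fragments such as
// "JA" from matching large parts of the reference set.
const minPrefixLen = 4

// prefixMatch returns the reference name that starts with input and has the
// highest coverage, scored as len(input) / len(name) * 0.95.
func prefixMatch(input string, names []string) (string, float64, bool) {
	inLen := utf8.RuneCountInString(input)
	if inLen < minPrefixLen {
		return "", 0, false
	}
	best, bestConf := "", 0.0
	for _, name := range names {
		nameLen := utf8.RuneCountInString(name)
		// Equal length would be an exact match, which is handled earlier.
		if nameLen <= inLen || !strings.HasPrefix(name, input) {
			continue
		}
		if conf := float64(inLen) / float64(nameLen) * 0.95; conf > bestConf {
			best, bestConf = name, conf
		}
	}
	return best, bestConf, best != ""
}

func main() {
	names := []string{"JAKARTA PUSAT", "JAKARTA UTARA", "BANDUNG KOTA"}
	name, conf, ok := prefixMatch("JAKARTA PUS", names)
	fmt.Printf("%s %.3f %v\n", name, conf, ok) // JAKARTA PUSAT 0.804 true
}
```

In this example, "JAKARTA PUS" covers 11 of the 13 characters in "JAKARTA PUSAT", so the formula gives 11/13 × 0.95 ≈ 0.80.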

Fuzzy match (confidence: 60-90%). Levenshtein distance against the full reference set. Raw edit distance alone produces false positives – “JKT” is close to “JAKARTA” by edit count but shouldn’t match. The engine also checks length similarity, character overlap (60% minimum), and administrative level to filter candidates.

max_distance = max(min(len(input), len(candidate)) / 2, 1)

confidence = (max_distance - actual_distance) / max_distance * 0.9

The max() floor of 1 prevents division by zero on very short inputs.
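The concurrent code below calls a findBestFuzzyMatch helper that the article doesn't show. The sketch here is one way to write it from the two formulas above. It uses a two-row Levenshtein distance, integer division for max_distance, and a 60% character-overlap filter. Several pieces are assumptions: the Match type, and the overlap definition (shared characters counted with multiplicity, divided by the longer name's length). The length-similarity and administrative-level checks are left out.

```go
package main

import "fmt"

// Match is a hypothetical result type: a reference name and its confidence.
type Match struct {
	Name       string
	Confidence float64
}

func minInt(a, b int) int {
	if a < b {
		return a
	}
	return b
}

func maxInt(a, b int) int {
	if a > b {
		return a
	}
	return b
}

// levenshtein computes edit distance over runes with two rolling rows.
func levenshtein(a, b []rune) int {
	prev := make([]int, len(b)+1)
	curr := make([]int, len(b)+1)
	for j := range prev {
		prev[j] = j
	}
	for i := 1; i <= len(a); i++ {
		curr[0] = i
		for j := 1; j <= len(b); j++ {
			cost := 1
			if a[i-1] == b[j-1] {
				cost = 0
			}
			curr[j] = minInt(minInt(prev[j]+1, curr[j-1]+1), prev[j-1]+cost)
		}
		prev, curr = curr, prev
	}
	return prev[len(b)]
}

// overlap (assumed definition): shared characters, counted with
// multiplicity, divided by the length of the longer string.
func overlap(a, b []rune) float64 {
	counts := make(map[rune]int)
	for _, r := range a {
		counts[r]++
	}
	shared := 0
	for _, r := range b {
		if counts[r] > 0 {
			counts[r]--
			shared++
		}
	}
	return float64(shared) / float64(maxInt(len(a), len(b)))
}

// findBestFuzzyMatch applies the overlap filter and the distance formulas,
// keeping the highest-confidence candidate (zero Match if none qualifies).
func findBestFuzzyMatch(input string, candidates []string) Match {
	in := []rune(input)
	var best Match
	for _, c := range candidates {
		cand := []rune(c)
		if len(in) == 0 || overlap(in, cand) < 0.6 {
			continue
		}
		maxDist := maxInt(minInt(len(in), len(cand))/2, 1)
		d := levenshtein(in, cand)
		if d > maxDist {
			continue
		}
		if conf := float64(maxDist-d) / float64(maxDist) * 0.9; conf > best.Confidence {
			best = Match{Name: c, Confidence: conf}
		}
	}
	return best
}

func main() {
	refs := []string{"JAKARTA PUSAT", "JAKARTA UTARA", "MENTENG"}
	fmt.Printf("%+v\n", findBestFuzzyMatch("JAKRTA PUSAT", refs))
	fmt.Printf("%+v\n", findBestFuzzyMatch("JKT", []string{"JAKARTA"}))
}
```

For "JAKRTA PUSAT", max_distance is 12/2 = 6 and the distance to "JAKARTA PUSAT" is 1, giving 5/6 × 0.9 = 0.75. "JKT" fails both checks against "JAKARTA": its overlap is 3/7, and its distance of 4 exceeds a max_distance of 1.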

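Concurrent fuzzy (confidence: 60-90%). Same algorithm, split across goroutines for large reference datasets, for a 3-4x speedup. Each worker only sees its own partition, so an individual worker's local best may not be the global best. As long as the per-worker results are combined by keeping the highest confidence, though, the final winner matches a sequential scan. Only ties between equally scored candidates can resolve differently.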

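func findConcurrentMatch(input string, candidates []string) Match {
	numWorkers := 4
	if len(candidates) < numWorkers {
		numWorkers = len(candidates) // avoid workers with empty batches
	}
	if numWorkers == 0 {
		return Match{}
	}
	batchSize := len(candidates) / numWorkers
	results := make(chan Match, numWorkers) // buffered: sends never block

	for i := 0; i < numWorkers; i++ {
		start := i * batchSize
		end := start + batchSize
		if i == numWorkers-1 {
			end = len(candidates) // last worker takes the remainder
		}
		go func(batch []string) {
			results <- findBestFuzzyMatch(input, batch)
		}(candidates[start:end])
	}

	// Receive exactly one result per worker and keep the highest confidence;
	// the best of the local bests is the global best.
	best := <-results
	for i := 1; i < numWorkers; i++ {
		if m := <-results; m.Confidence > best.Confidence {
			best = m
		}
	}
	return best
}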
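The fan-out/reduce shape doesn't depend on the scoring function, so it can be checked in isolation. The sketch below generalizes it. concurrentBest is a hypothetical name; it takes any score function and uses sync.WaitGroup so the reducer can range over a closed channel. Every candidate falls in exactly one batch, so the maximum of the per-batch maxima equals what a sequential scan finds.

```go
package main

import (
	"fmt"
	"sync"
)

// scored pairs a candidate with its score.
type scored struct {
	Name  string
	Score float64
}

// concurrentBest (hypothetical) splits candidates into contiguous batches,
// scores each batch in its own goroutine, and returns the overall best.
func concurrentBest(candidates []string, numWorkers int, score func(string) float64) scored {
	if numWorkers > len(candidates) {
		numWorkers = len(candidates)
	}
	if numWorkers == 0 {
		return scored{}
	}
	batchSize := len(candidates) / numWorkers
	results := make(chan scored, numWorkers)
	var wg sync.WaitGroup
	for i := 0; i < numWorkers; i++ {
		start, end := i*batchSize, (i+1)*batchSize
		if i == numWorkers-1 {
			end = len(candidates) // last worker takes the remainder
		}
		wg.Add(1)
		go func(batch []string) {
			defer wg.Done()
			var local scored
			for _, c := range batch {
				if s := score(c); s > local.Score {
					local = scored{Name: c, Score: s}
				}
			}
			results <- local
		}(candidates[start:end])
	}
	wg.Wait()
	close(results)
	// Reduce: the maximum of the local maxima is the global maximum.
	var best scored
	for r := range results {
		if r.Score > best.Score {
			best = r
		}
	}
	return best
}

func main() {
	refs := []string{"BANDUNG", "JAKARTA BARAT", "JAKARTA PUSAT", "MENTENG", "SEMARANG"}
	input := "JAKARTA PUS"
	// Hypothetical scorer: length of the common prefix with the input.
	score := func(c string) float64 {
		n := 0
		for n < len(c) && n < len(input) && c[n] == input[n] {
			n++
		}
		return float64(n)
	}
	fmt.Println(concurrentBest(refs, 4, score).Name) // JAKARTA PUSAT
}
```

The common-prefix scorer in the usage is only a stand-in for the Levenshtein-based confidence.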

Strategy selection cascades with early termination:

1. Exact match -- confidence >= 0.95 -- return

2. Prefix match -- confidence >= 0.80 -- return

3. Fuzzy match -- confidence >= 0.60 -- return

4. Concurrent -- confidence >= 0.60 -- return as alternative

5. No match -- flag for human review
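The cascade above can be expressed as an ordered list of strategies, each with its own cutoff. The sketch below makes some assumptions. The strategy and Resolution types and the NeedsReview flag are hypothetical, and the stub matchers stand in for the real ones. The "return as alternative" handling of the concurrent tier is reduced here to the Strategy label on the result.

```go
package main

import "fmt"

// strategy is one matching pass with its acceptance cutoff (hypothetical).
type strategy struct {
	Name    string
	MinConf float64
	Match   func(input string) (string, float64)
}

// Resolution is the outcome of running the cascade on one field.
type Resolution struct {
	Value       string
	Confidence  float64
	Strategy    string
	NeedsReview bool
}

// resolve tries strategies in order and stops at the first whose confidence
// clears its cutoff; if none does, the raw input is flagged for review.
func resolve(input string, strategies []strategy) Resolution {
	for _, s := range strategies {
		if value, conf := s.Match(input); value != "" && conf >= s.MinConf {
			return Resolution{Value: value, Confidence: conf, Strategy: s.Name}
		}
	}
	return Resolution{Value: input, NeedsReview: true}
}

func main() {
	// Stubs standing in for the real matchers.
	exact := func(in string) (string, float64) {
		if in == "MENTENG" {
			return "MENTENG", 1.0
		}
		return "", 0
	}
	none := func(string) (string, float64) { return "", 0 }
	fuzzy := func(in string) (string, float64) {
		if in == "MENTNG" {
			return "MENTENG", 0.6
		}
		return "", 0
	}
	cascade := []strategy{
		{"exact", 0.95, exact},
		{"prefix", 0.80, none},
		{"fuzzy", 0.60, fuzzy},
		{"concurrent", 0.60, none},
	}
	fmt.Printf("%+v\n", resolve("MENTNG", cascade))
	fmt.Printf("%+v\n", resolve("XYZ", cascade))
}
```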

Hierarchy validation

Administrative divisions form a strict tree: Province, City, District, Village. The sanitizer resolves each level independently, then validates that the results form a valid path. “MENTENG” under “JAKARTA PUSAT” is valid. “MENTENG” under “BANDUNG” is not. This catches cases where each field resolves to a real place name on its own but the combination is geographically impossible.
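Path validation reduces to checking each resolved level against its parent. The following is a minimal sketch over toy reference data. The edge type, the hierarchy map, and validatePath are hypothetical. The depth is part of each key because a name such as MENTENG appears at both district and village level. A real dataset would more likely key on administrative codes than on names.

```go
package main

import "fmt"

// edge records that child sits directly under parent at a given depth
// (1 = city under province, 2 = district under city, 3 = village under district).
type edge struct {
	depth         int
	parent, child string
}

// hierarchy is a toy stand-in for the loaded reference tree.
var hierarchy = map[edge]bool{
	{1, "DKI JAKARTA", "JAKARTA PUSAT"}: true,
	{2, "JAKARTA PUSAT", "MENTENG"}:     true,
	{3, "MENTENG", "MENTENG"}:           true,
	{1, "JAWA BARAT", "BANDUNG"}:        true,
}

// validatePath checks each level against its parent and reports the first
// link that does not exist in the reference tree.
func validatePath(path []string) error {
	for i := 1; i < len(path); i++ {
		if !hierarchy[edge{i, path[i-1], path[i]}] {
			return fmt.Errorf("%q is not under %q", path[i], path[i-1])
		}
	}
	return nil
}

func main() {
	fmt.Println(validatePath([]string{"DKI JAKARTA", "JAKARTA PUSAT", "MENTENG", "MENTENG"})) // <nil>
	fmt.Println(validatePath([]string{"JAWA BARAT", "BANDUNG", "MENTENG"}))                    // "MENTENG" is not under "BANDUNG"
}
```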

Async loading

The reference dataset – millions of administrative divisions – takes seconds to load. The OCR service shouldn’t block on it.

func (s *sanitizer) Sanitize(input AddressData) *SanitizedResult {
	if !s.IsReady() {
		s.waitForReady(100 * time.Millisecond)
		if !s.IsReady() {
			return &SanitizedResult{
				ValidationErrors: []string{"Reference data not yet loaded"},
				IsValid:          false,
				Skipped:          true,
			}
		}
	}

	// process normally
}

Documents processed before the data loads are marked invalid with Skipped: true – the consumer knows validation didn’t run and can retry or queue for later. Once the reference set is ready, the sanitizer picks up without a restart.

Caching

OCR errors are consistent. The same document template produces the same mistakes across thousands of scans. An LRU cache keyed on raw input plus field type achieves a 60-80% hit rate in production. Caching “no match” results matters too – without it, the engine re-runs all four strategies on the same unresolvable input every time.
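The sketch below shows that cache built on container/list. The key combines field type and raw input. An entry with Found set to false records a "no match", so the cascade isn't re-run for that input. The type names are assumptions, as is the mutex, which is added on the assumption that Sanitize may be called concurrently. In practice the capacity would come from CacheSize in the configuration.

```go
package main

import (
	"container/list"
	"fmt"
	"sync"
)

// cacheKey combines field type and raw OCR text, so the same string as a
// district and as a village is cached separately.
type cacheKey struct {
	Field string
	Raw   string
}

// cacheValue stores a resolved name, or Found=false for a cached "no match".
type cacheValue struct {
	Name       string
	Confidence float64
	Found      bool
}

type entry struct {
	key cacheKey
	val cacheValue
}

// lruCache (hypothetical) is a fixed-capacity, mutex-guarded LRU cache.
type lruCache struct {
	mu       sync.Mutex
	capacity int
	order    *list.List // front = most recently used
	items    map[cacheKey]*list.Element
}

func newLRU(capacity int) *lruCache {
	return &lruCache{capacity: capacity, order: list.New(), items: make(map[cacheKey]*list.Element)}
}

// Get returns a cached value and marks it as most recently used.
func (c *lruCache) Get(k cacheKey) (cacheValue, bool) {
	c.mu.Lock()
	defer c.mu.Unlock()
	if el, ok := c.items[k]; ok {
		c.order.MoveToFront(el)
		return el.Value.(*entry).val, true
	}
	return cacheValue{}, false
}

// Put inserts or updates a value, evicting the least recently used entry
// when the cache grows past capacity.
func (c *lruCache) Put(k cacheKey, v cacheValue) {
	c.mu.Lock()
	defer c.mu.Unlock()
	if el, ok := c.items[k]; ok {
		el.Value.(*entry).val = v
		c.order.MoveToFront(el)
		return
	}
	c.items[k] = c.order.PushFront(&entry{key: k, val: v})
	if c.order.Len() > c.capacity {
		oldest := c.order.Back()
		c.order.Remove(oldest)
		delete(c.items, oldest.Value.(*entry).key)
	}
}

func main() {
	c := newLRU(2)
	c.Put(cacheKey{"district", "MENTNG"}, cacheValue{"MENTENG", 0.6, true})
	c.Put(cacheKey{"village", "XQZ"}, cacheValue{Found: false}) // cached "no match"
	v, hit := c.Get(cacheKey{"village", "XQZ"})
	fmt.Println(hit, v.Found) // true false: skip the cascade, it already failed
}
```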

Configuration

type SanitizerConfig struct {
	CacheSize           int     `json:"cache_size"`
	ExcellentConfidence float64 `json:"excellent_confidence"` // 0.95
	GoodConfidence      float64 `json:"good_confidence"`      // 0.80
	MinConfidence       float64 `json:"min_confidence"`       // 0.60
	MaxEditDistance     int     `json:"max_edit_distance"`    // 4
	FuzzyWorkerCount    int     `json:"fuzzy_worker_count"`   // 4
}

Thresholds are tunable per deployment. Above 95% is auto-accepted. 80-95% gets a spot check. Below 60% goes to human review. Different documents, different accuracy requirements.
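Here is a sketch of how the config might be loaded and applied. It starts from defaults that match the commented values and lets a per-deployment JSON file override any subset of fields. It then maps a confidence to a handling tier. Several details are assumptions: the names defaultConfig, loadConfig, and tierFor are hypothetical, and the 10000 for CacheSize is a placeholder because no default is given. The 60-80% band isn't described above, so the sketch gives it its own tier rather than guessing how it's handled. The struct is repeated so the block compiles on its own.

```go
package main

import (
	"encoding/json"
	"fmt"
)

type SanitizerConfig struct {
	CacheSize           int     `json:"cache_size"`
	ExcellentConfidence float64 `json:"excellent_confidence"`
	GoodConfidence      float64 `json:"good_confidence"`
	MinConfidence       float64 `json:"min_confidence"`
	MaxEditDistance     int     `json:"max_edit_distance"`
	FuzzyWorkerCount    int     `json:"fuzzy_worker_count"`
}

// defaultConfig uses the commented values; CacheSize is a placeholder.
func defaultConfig() SanitizerConfig {
	return SanitizerConfig{
		CacheSize:           10000,
		ExcellentConfidence: 0.95,
		GoodConfidence:      0.80,
		MinConfidence:       0.60,
		MaxEditDistance:     4,
		FuzzyWorkerCount:    4,
	}
}

// loadConfig overlays deployment JSON on the defaults; json.Unmarshal only
// sets fields present in the input, so the rest keep their defaults.
func loadConfig(raw []byte) (SanitizerConfig, error) {
	cfg := defaultConfig()
	err := json.Unmarshal(raw, &cfg)
	return cfg, err
}

// tierFor maps a confidence to a handling tier (hypothetical tier names).
func tierFor(conf float64, cfg SanitizerConfig) string {
	switch {
	case conf >= cfg.ExcellentConfidence:
		return "auto-accept"
	case conf >= cfg.GoodConfidence:
		return "spot-check"
	case conf >= cfg.MinConfidence:
		return "low-confidence" // band not specified in the text
	default:
		return "human-review"
	}
}

func main() {
	cfg, err := loadConfig([]byte(`{"min_confidence": 0.7}`))
	if err != nil {
		panic(err)
	}
	fmt.Println(cfg.MinConfidence, cfg.GoodConfidence)  // 0.7 0.8
	fmt.Println(tierFor(1.0, cfg), tierFor(0.62, cfg)) // auto-accept human-review
}
```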

In production

85% reduction in address validation errors. 40ms average per document across all address fields. 75% cache hit rate.

Strategy distribution: 35% exact, 25% prefix, 30% fuzzy, 8% concurrent, 2% no match. The correction table and the cheap strategies carry most of the load: exact and prefix matching together account for 60%. Fuzzy matching is the safety net, not the primary path, and that's the right ordering. You want your expensive strategy to be the fallback, not the default.

Testing with real OCR output rather than synthetic data made the difference. Real errors cluster – character substitutions concentrate around specific letter pairs, truncation follows document template layouts, noise artifacts repeat across scans of the same form type. Synthetic test cases miss these correlations entirely.

The other thing that worked: exposing confidence to the consumer. A 62% fuzzy match and a 100% exact match are not the same quality of answer. Letting the downstream system decide what to trust, rather than forcing a binary correct/incorrect, made the whole pipeline more useful.