Read something recently about students deliberately making their writing “imperfect” so AI detectors don’t flag it. Removing polish, flattening style, adding imperfections on purpose. Their work got good enough to look suspicious.

We’re doing the same thing with code reviews.

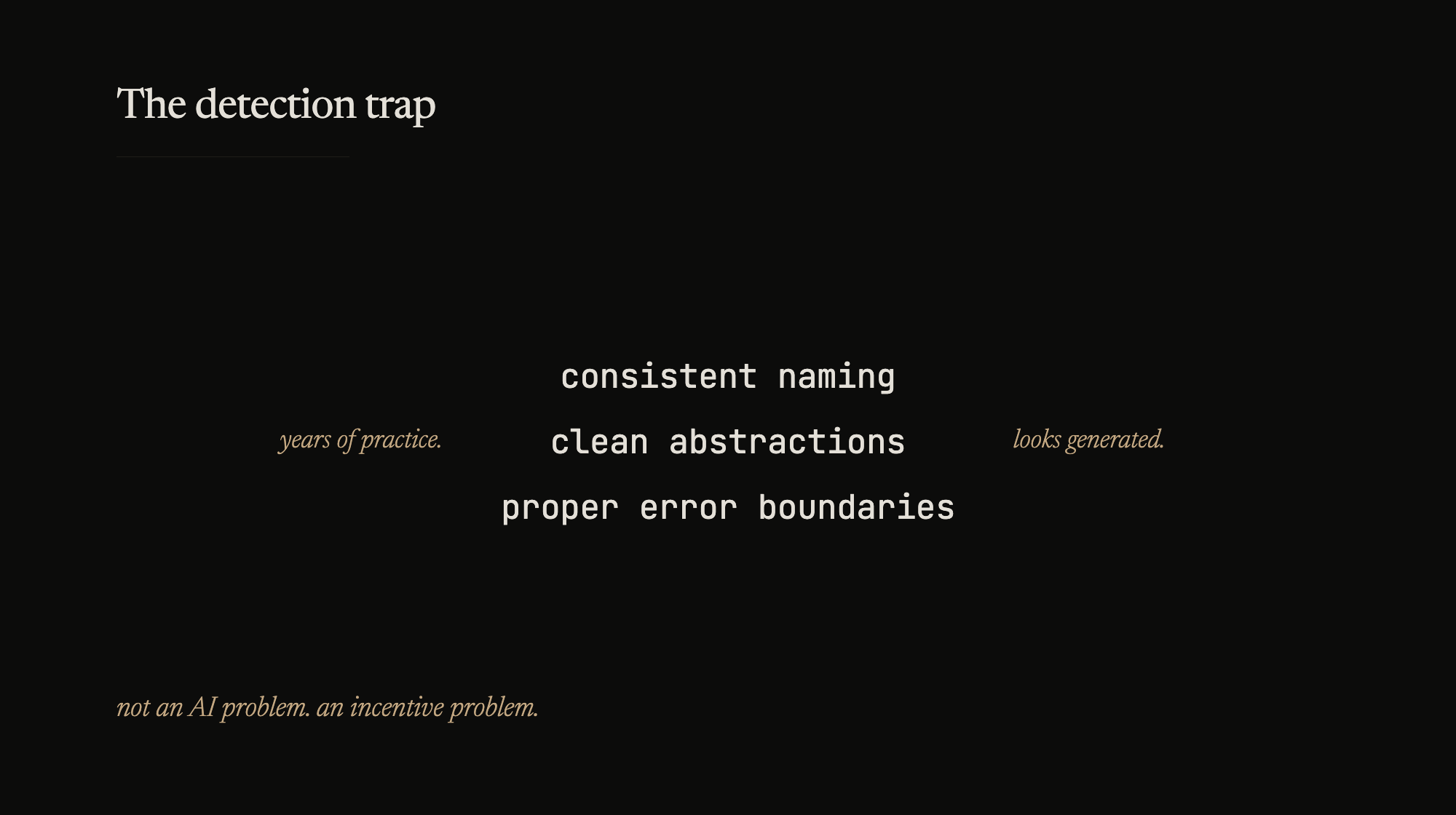

I’ve been on both sides of this. I’ve written a clean abstraction with consistent naming and proper error boundaries, then watched someone in review say “this looks generated.” Years of caring about consistency, and now consistency is the tell.

The instinct to police tools instead of evaluating output comes from the same place as managers who track keystrokes instead of reading the commit. You can’t judge quality by watching the process. You never could.

I don’t care whether someone used Copilot. I care whether they can explain the code when I ask. I’ve worked with engineers who wrote everything by hand and couldn’t tell me why they chose that data structure. I’ve worked with engineers who scaffolded with AI and could walk me through every tradeoff. The tool is noise. The understanding is signal.

If you set up a system where quality looks suspicious, people stop producing quality. That’s not an AI problem; it’s an incentive problem, and it’s older than any of the tools we’re arguing about.