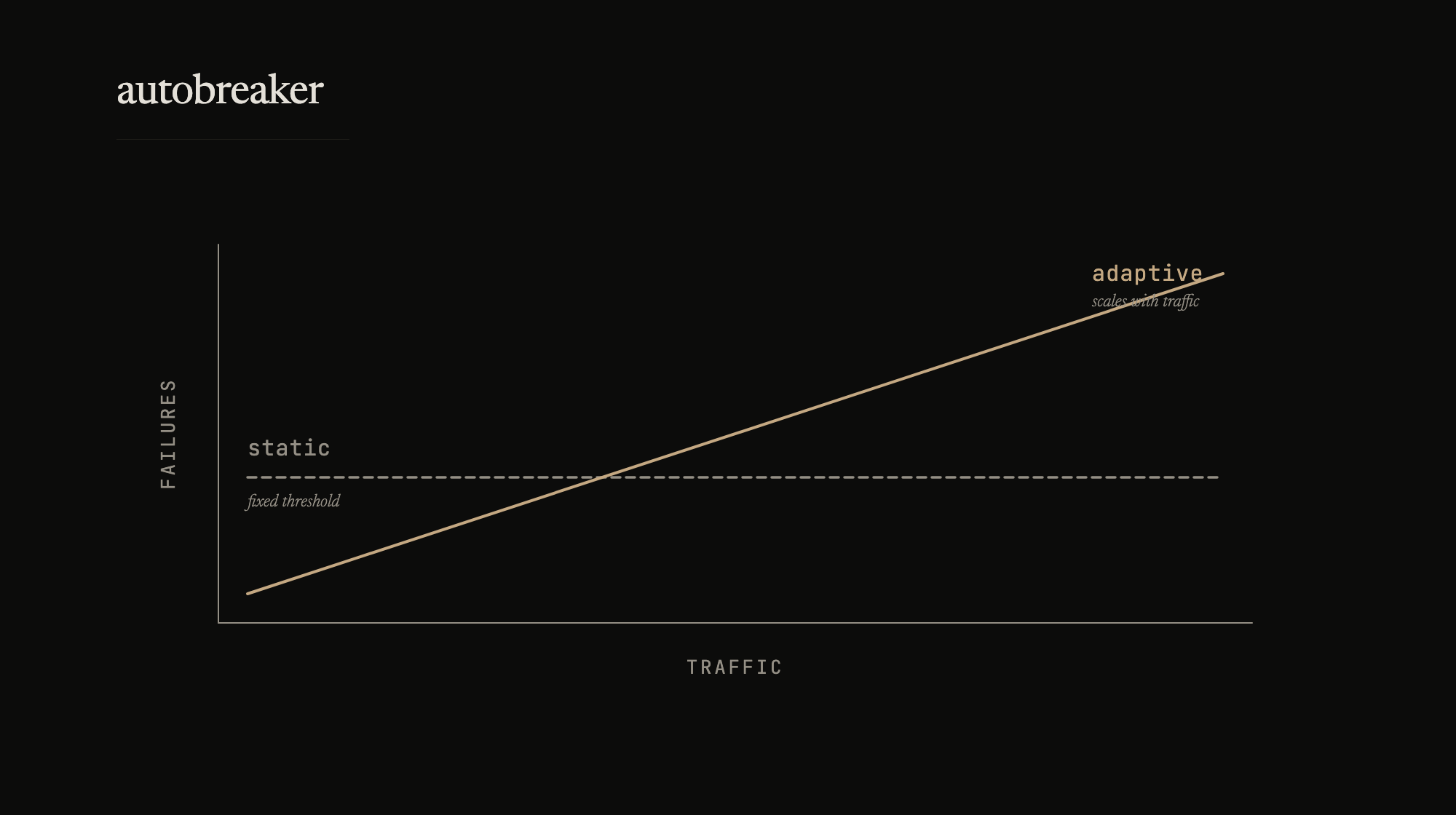

The circuit breaker post from last year used a common trigger: trip after N consecutive failures. This works when traffic is predictable. It falls apart when it’s not.

At 10,000 requests per second, 10 failures is noise – a 0.1% error rate. A static threshold trips the circuit on what’s essentially a healthy service. At 10 requests per second, 10 failures is total collapse – 100% error rate over one interval. The same threshold that false-positives under high traffic is too slow to protect under low traffic.

This is the problem autobreaker solves. Instead of counting failures, it watches the failure rate.

Percentage-based thresholds

The configuration shift is small:

```go
breaker := autobreaker.New(autobreaker.Settings{
	Name:                 "payment-service",
	Timeout:              10 * time.Second,
	AdaptiveThreshold:    true,
	FailureRateThreshold: 0.05, // trip at 5% failure rate
	MinimumObservations:  20,   // need 20 requests before evaluating
})
```

At 10,000 RPS with a 10-second interval, the breaker sees 100,000 requests and trips once failures pass 5,000. At 10 RPS, it sees 100 requests and trips once failures pass 5. Same configuration, correct behavior at both extremes. No per-environment tuning.
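A minimal sketch of how a 5% threshold scales with traffic. `failuresToTrip` is a hypothetical helper, not part of autobreaker. It assumes counts accumulate over a fixed 10-second window and that the breaker trips only when the rate strictly exceeds the threshold.

```go
package main

import "fmt"

// failuresToTrip returns the smallest failure count whose rate strictly
// exceeds threshold for the given number of requests. Hypothetical helper
// for illustration; not part of the autobreaker API.
func failuresToTrip(requests int, threshold float64) int {
	for f := 0; f <= requests; f++ {
		if float64(f)/float64(requests) > threshold {
			return f
		}
	}
	return -1 // the threshold cannot be exceeded
}

func main() {
	const interval = 10 // seconds; assumed counting window
	for _, rps := range []int{10, 10_000} {
		requests := rps * interval
		fmt.Printf("%d RPS: %d requests, trips at %d failures\n",
			rps, requests, failuresToTrip(requests, 0.05))
	}
}
```

The failure count needed to trip grows with the request volume, which is the property a fixed count cannot give you.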

MinimumObservations prevents the circuit from tripping on small samples. Two failures out of three requests is a 67% failure rate, but it’s not statistically meaningful. The threshold only activates after enough requests have been observed.

The trip logic:

```go
func (cb *CircuitBreaker) defaultAdaptiveReadyToTrip(counts Counts) bool {
	if counts.Requests < cb.getMinimumObservations() {
		return false
	}
	failureRate := float64(counts.TotalFailures) / float64(counts.Requests)
	return failureRate > cb.getFailureRateThreshold()
}
```
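To see the guard in action, here is a standalone sketch of the same check. The `Counts` struct below is a stand-in that models only the two fields used here, and the thresholds are passed in explicitly instead of being read from the breaker; both are assumptions for illustration.

```go
package main

import "fmt"

// Counts is a stand-in for the library's counts type; only the two fields
// used by the trip check are modeled here.
type Counts struct {
	Requests      uint32
	TotalFailures uint32
}

// readyToTrip applies the minimum-observations guard, then compares the
// failure rate against the threshold (strictly greater than).
func readyToTrip(c Counts, minObservations uint32, threshold float64) bool {
	if c.Requests < minObservations {
		return false // sample too small to judge
	}
	return float64(c.TotalFailures)/float64(c.Requests) > threshold
}

func main() {
	fmt.Println(readyToTrip(Counts{Requests: 3, TotalFailures: 2}, 20, 0.05))  // false: below the minimum
	fmt.Println(readyToTrip(Counts{Requests: 20, TotalFailures: 1}, 20, 0.05)) // false: exactly 5%, not above
	fmt.Println(readyToTrip(Counts{Requests: 20, TotalFailures: 2}, 20, 0.05)) // true: 10% exceeds 5%
}
```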

Setting AdaptiveThreshold: false falls back to the traditional consecutive-failures model, so the library covers both approaches.

Lock-free internals

The hot path – Execute in the closed state – runs in under 100 nanoseconds with zero allocations. No mutexes. All state is managed through sync/atomic.

State transitions use CompareAndSwap so that exactly one goroutine wins when multiple attempt the same transition:

```go
// Only one goroutine transitions from open to half-open
if !cb.state.CompareAndSwap(int32(StateOpen), int32(StateHalfOpen)) {
	return // another goroutine already claimed the probe slot
}
```

The open-to-half-open transition is particularly susceptible to this race. Multiple goroutines can observe that the timeout has elapsed and all attempt to send a probe. Without CAS, you get multiple probes – which defeats the purpose of half-open as a controlled test. The same pattern guards closed-to-open transitions.
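A self-contained sketch of that guarantee, assuming Go 1.19+ for the typed atomics. The state constants and `raceToHalfOpen` are illustrative names, not the library's internals.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

const (
	stateClosed int32 = iota
	stateOpen
	stateHalfOpen
)

// raceToHalfOpen starts n goroutines that all attempt the open -> half-open
// transition and returns how many of them won the CompareAndSwap.
func raceToHalfOpen(n int) int32 {
	var state atomic.Int32
	state.Store(stateOpen)

	var winners atomic.Int32
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			if state.CompareAndSwap(stateOpen, stateHalfOpen) {
				winners.Add(1) // this goroutine would send the probe
			}
		}()
	}
	wg.Wait()
	return winners.Load()
}

func main() {
	fmt.Println("probes sent:", raceToHalfOpen(100))
}
```

Only the first swap can succeed, because every later attempt finds the state already changed. The winner count is 1 regardless of scheduling.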

Float64 values (like the failure rate threshold) are stored as uint64 bit patterns for atomic access. Durations are stored as int64 nanoseconds. The cost is a less readable implementation, but the public API hides the encoding behind typed accessors.

Counters saturate at math.MaxUint32 instead of wrapping. Overflow in a counter would silently corrupt the failure rate calculation. Saturation logs a warning once and stops incrementing – the rate calculation stays valid, and the operational signal is preserved.

Context-aware execution

```go
result, err := breaker.ExecuteContext(ctx, func() (interface{}, error) {
	return client.DoRequest(ctx)
})
```

The distinction that matters: context cancellation is not a backend failure. If the caller times out or cancels, that’s a client-side decision – it says nothing about the health of the dependency. ExecuteContext doesn’t count cancellations against the failure rate. Without this, a slow client or an aggressive timeout policy can trip the circuit even when the backend is healthy.

Runtime reconfiguration

Thresholds that work in testing don’t always work under production load. Rather than recreating the breaker (and losing state), settings can be updated atomically:

```go
err := breaker.UpdateSettings(autobreaker.SettingsUpdate{
	FailureRateThreshold: autobreaker.Float64Ptr(0.10),
	Timeout:              autobreaker.DurationPtr(30 * time.Second),
})
```

Updates are all-or-nothing – if validation fails on any field, nothing changes. Pointer fields in SettingsUpdate distinguish “not provided” from “set to zero value.” Callbacks (ReadyToTrip, OnStateChange, IsSuccessful) are immutable after construction – they define the breaker’s contract, not its tuning.

Observability

Two levels. Metrics() returns the current snapshot – state, counts, failure and success rates, timestamps. Useful for dashboards and alerting.

Diagnostics() goes further with predictions:

```go
diag := breaker.Diagnostics()

diag.WillTripNext      // true if next failure would trip the circuit
diag.TimeUntilHalfOpen // duration until probe attempt (when open)
```

WillTripNext is the interesting one. It evaluates the current counts against the threshold and tells you whether one more failure would trip the circuit. Useful for preemptive alerts – knowing you’re on the edge before you go over it.
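One plausible way to compute such a prediction is to apply the trip check to the counts as they would look after one more failed request. This is a guess at the approach, not the library's implementation; `Counts` and `willTripNext` are stand-ins.

```go
package main

import "fmt"

// Counts is a stand-in for the library's counts type.
type Counts struct {
	Requests      uint32
	TotalFailures uint32
}

// willTripNext reports whether one additional failed request would push
// the failure rate above threshold, honoring the minimum sample size.
func willTripNext(c Counts, minObservations uint32, threshold float64) bool {
	next := Counts{Requests: c.Requests + 1, TotalFailures: c.TotalFailures + 1}
	if next.Requests < minObservations {
		return false
	}
	return float64(next.TotalFailures)/float64(next.Requests) > threshold
}

func main() {
	fmt.Println(willTripNext(Counts{Requests: 99, TotalFailures: 4}, 20, 0.05)) // false: 5/100 is not above 5%
	fmt.Println(willTripNext(Counts{Requests: 99, TotalFailures: 5}, 20, 0.05)) // true: 6/100 exceeds 5%
}
```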

Callback safety

All user-provided callbacks – ReadyToTrip, OnStateChange, IsSuccessful – are wrapped with panic recovery. A panic in ReadyToTrip returns false (don’t trip, conservative default). A panic in IsSuccessful returns false (treat as failure, conservative default). A panic in OnStateChange logs and proceeds with the transition.

This is a library boundary decision. The circuit breaker can’t trust the callbacks it’s given, and it can’t afford to crash the calling service because someone’s state-change handler had an unhandled nil pointer. The fallback behavior is always the safer option – keep the circuit running, keep requests flowing unless there’s a genuine reason to stop.
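The wrapping pattern is a deferred `recover` that overwrites a named return value. A minimal sketch for the ReadyToTrip case, with `safeReadyToTrip` and `Counts` as illustrative names:

```go
package main

import "fmt"

// Counts is a stand-in for the library's counts type.
type Counts struct {
	Requests      uint32
	TotalFailures uint32
}

// safeReadyToTrip calls fn and converts a panic into the conservative
// answer false ("do not trip").
func safeReadyToTrip(fn func(Counts) bool, c Counts) (trip bool) {
	defer func() {
		if r := recover(); r != nil {
			trip = false // swallow the panic; a real wrapper would also log r
		}
	}()
	return fn(c)
}

func main() {
	buggy := func(Counts) bool {
		var m map[string]int
		m["x"] = 1 // panics: assignment to entry in nil map
		return true
	}
	fmt.Println(safeReadyToTrip(buggy, Counts{})) // false
}
```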

Error classification

Not all errors are backend health signals. A 400 response means the client sent a bad request – the backend is fine. A 503 means the backend is overloaded.

```go
breaker := autobreaker.New(autobreaker.Settings{
	Name: "http-client",
	IsSuccessful: func(err error) bool {
		if err == nil {
			return true
		}
		var httpErr *HTTPError
		if errors.As(err, &httpErr) {
			return httpErr.StatusCode < 500
		}
		return false
	},
})
```

Client errors (4xx) don’t count against the failure rate. Server errors (5xx) do. Without this distinction, a burst of bad requests from upstream can trip the circuit on a healthy dependency.

The adaptive tradeoff

Percentage-based thresholds solve the traffic-scaling problem but introduce a different sensitivity: they require a meaningful sample size. The MinimumObservations window means the circuit won’t protect against a burst of failures if traffic is very low and the window hasn’t filled yet. The traditional consecutive-failures model catches that case faster.

In practice, the services that need circuit breaking tend to be the ones with enough traffic to fill the observation window quickly. For low-traffic internal services where a handful of consecutive failures genuinely indicates a problem, the static model is still the right choice. The library supports both – AdaptiveThreshold: false gives you the traditional behavior.

The source is on GitHub. Zero dependencies, standard library only.